Soylent Green Is People

Silicon Valley green is made of people. This is succinctly captured in the phrase: When you don’t pay for the product, the product is you. It explains how companies attain multi-billion dollar valuations despite offering their services for free. They promise revenue through the glorification of advertising.

Investors argue that high valuations reflect a company’s potential for growth. That growth comes from attracting new users. Those users in turn become targets for advertising. And sites, once bastions of clean design, become concoctions of user-generated content, ad banners, and sponsored features.

Population Growth

Sites measure their popularity by a single serving size: the user. Therefore, one way to interpret a company’s valuation is in its price per user. That is, how many calories can a site gain from a single serving? How many servings must it consume to become a hulking giant of the web?

You know where this is going.

The movie Soylent Green presented a future where a corporation provided seemingly beneficent services to a hungry world. It wasn’t the only story with themes of overpopulation and environmental catastrophe to emerge from the late ’60s and early ’70s. The movie was based on the novel Make Room! Make Room!, by Harry Harrison. And it had peers in John Brunner’s Stand on Zanzibar (and The Sheep Look Up) and Ursula K. Le Guin’s The Lathe of Heaven. These imagined worlds contained people powerful and poor. And they all had to feed.

A Furniture Arrangement

To sell is to feed. To feed is to buy.

In Soylent Green, Detective Thorn (Charlton Heston) visits an apartment to investigate the murder of a corporation’s board member, i.e. someone rich. He is unsurprised to encounter a woman there and, already knowing the answer, asks if she’s “the furniture.” It’s trivial to decipher this insinuation about a woman’s role in a world afflicted by overpopulation, famine, and disparate wealth. That an observation made in a movie forty years ago about a future ten years hence rings true today is distressing.

We are becoming products of web sites as we become targets for ads. But we are also becoming parts of those ads. Becoming furnishings for fancy apartments in a dystopian New York.

Women have been components of advertising for ages, selected as images relevant to manipulating a buyer no matter the irrelevance of their image to the product. That’s not changing. What is changing is some sites’ desire to turn all users into billboards. They want to create endorsements by you that target your friends. Your friends are as much a commodity as your information.

In this quest to build advertising revenue, sites also distill millions of users’ activity into individual recommendations of what they might want to buy or predictions of what they might be searching for.

And what a sludge that distillation produces.

There may be the occasional welcome discovery from a targeted ad, but there is also an unwelcome consequence of placing too much faith in algorithms. A few suggestions can become dominant viewpoints based more on others’ voices than personal preferences. More data does not always mean more accurate data.

We should not excuse algorithms as impartial oracles to society. They are tuned, after all. And those adjustments may reflect the bias and beliefs of the adjusters. For example, an ad campaign created for UN Women employed a simple premise: superimpose upon pictures of women a search engine’s autocomplete suggestions for phrases related to women. The result exposes biases reinforced by the louder voices of technology. More generally, a site can grow or die based on a search engine’s ranking. An algorithm collects data through a lens. It’s as important to know where the lens is not focused as where it is.

There is a point where information for services is no longer a fair trade. Where apps collect the maximum information to offer the minimum functionality. There should be more of a push for apps that work on an Information-to-Functionality relationship of minimum information requested for the maximum functionality delivered.

Going Home

In the movie, Sol (Edward G. Robinson) talks about going Home after a long life. Throughout the movie, Home is alluded to as the ultimate, welcoming destination. It’s a place of peace and respect. Home is where Sol reveals to Detective Thorn the infamous ingredient of Soylent Green.

Web sites want to be your home on the web. You’ll find them exhorting you to make their URL your browser’s homepage.

Web sites want your attention. They trade free services for personal information. At the very least, they want to sell your eyeballs. We’ve seen aggressive escalation of this in various privacy grabs, contact list pilfering, and weak apologies that “mistakes were made.”

More web sites and mobile apps are releasing features outright described as “creepy but cool” in the hope that the latter outweighs the former in a user’s mind. Services shouldn’t be expected to be free without some form of compensation; the Web doesn’t have to be uniformly altruistic. But there’s growing suspicion that personal information and privacy are being undervalued and under-protected by sites offering those services. There should be a balance between what a site offers to users and how much information it collects about users (and how long it keeps that information).

The Do Not Track effort fizzled, hobbled by indecision over a default setting. Browser makers have long encouraged default settings that favor stronger security, but they seem to have less consensus about what default privacy settings should be.
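For context, the Do Not Track preference reaches a site as a single request header, `DNT: 1`. The following is a minimal sketch of how a site might honor it, not any particular framework's API: the function name `tracking_allowed` and the plain dictionary of headers are assumptions made for illustration.

```python
def tracking_allowed(headers):
    """Return False when the request carries the header DNT: 1.

    `headers` is assumed to be a plain dict of request header names
    to values (a hypothetical shape chosen for this sketch).
    """
    # HTTP header names are case-insensitive, so normalize before lookup.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    # Only an explicit "1" signals that the user opted out of tracking.
    return normalized.get("dnt") != "1"


if __name__ == "__main__":
    print(tracking_allowed({"DNT": "1"}))          # user opted out
    print(tracking_allowed({"User-Agent": "x"}))   # no preference sent
```

Note what happens when the header is absent: the user has expressed nothing, and the site decides. That gap is where the fight over defaults matters.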

Third-party cookies will be devoured by progress; they are losing traction within the browser and mobile apps. Safari has long blocked them by default. Chrome has not. Mozilla has considered it. The cookies’ descendants may be cookie-less tracking mechanisms, which the web titans are already investigating. This isn’t necessarily a bad thing. Done well, a tracking mechanism can be limited to an app’s sandboxed perspective as opposed to a full view of a device. Such a restriction can limit the correlation of a user’s activity, thereby tipping the balance back towards privacy.

If you rely on advertising to feed your company and you do not control the browser, you risk going hungry. For example, only Chrome embeds the Flash plugin. An eternally vulnerable plugin that coincidentally plays videos for a revenue-generating site.

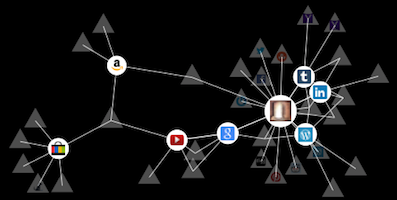

There are few means to make the browser an agent that prioritizes a user’s desires over a site’s. The Ghostery plugin is one tracking countermeasure available for all major browsers. Mozilla’s Lightbeam experiment does not block tracking mechanisms by default; it reveals how interconnected tracking has become due to ubiquitous cookies.

Browsers are becoming more secure, but they need a site’s cooperation to protect personal information. At the very least, sites should be using HTTPS to protect traffic as it flows from browser to server. To do so is laudable yet insufficient for protecting data. And even this positive step moves slowly. Privacy on mobile devices moves perhaps even more slowly. The recent iOS 7 finally forbids apps from accessing a device’s unique identifier, while Android struggles to offer comprehensive tools.

The browser is Home. Apps are Home. These are places where processing takes on new connotations. This is where our data becomes their food.

Soylent Green’s year 2022 approaches. Humanity must know.