
John Carpenter fans know the only way you’ll escape from New York is if Snake Plissken is there to get you out. When it comes to web security, don’t bother waiting for Kurt Russell’s help. You’re on your own.

And if you’re dealing with escape characters in JavaScript strings, you’ll want to make sure your application is a maximum security environment.

Imagine an app with a search function. It takes a form field named `q` and, instead of reflecting the search term in the field’s value, it updates the `value` attribute with a one-line JavaScript call. Normally, you’d expect an app to just rewrite the `<input>` field like so:

    <input id="searchResult" type="text" name="q" value="abc">

It’s not necessarily a bad idea to update the element’s `value` with JavaScript. Building HTML with string concatenation is a notorious vector for XSS. Writing the value with JavaScript might be more secure than rebuilding the HTML every time because the assignment avoids several encoding problems. This works if you’re keeping the HTML static and trading JSON messages with the server.

On the other hand, if you move the server-side string concatenation from the `<input>` field to a `<script>` tag, then you’ve shifted the XSS problem to a different vector. In our target app, the `<input>` field’s value was delimited with quotation marks (`"`). The JavaScript code uses apostrophes (`'`) to delimit the string, as follows:

    <script>
    document.getElementById('searchResult').value = 'abc';
    </script>

Rather than strip apostrophes from the search variable’s value, the developers decided to escape them with backslashes. Here’s how it’s expected to work when a user searches for `abc'`.

    document.getElementById('searchResult').value = 'abc\'';

Escaping the payload’s apostrophe preserves the original string delimiters, prevents the JavaScript syntax from being manipulated, and blocks HTML injection attacks – so it seems.
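The escaping step described above might look something like the following sketch. The function names are hypothetical and the target app's real server-side code is unknown; this is only a reconstruction of the idea of escaping apostrophes and nothing else.

```javascript
// Hypothetical reconstruction of the flawed approach; not the app's actual code.
function escapeApostrophes(term) {
  // Only the apostrophe is escaped. Backslashes pass through untouched.
  return term.replace(/'/g, "\\'");
}

// Builds the one-line assignment by string concatenation, as the app is described as doing.
function renderSearchScript(term) {
  return "document.getElementById('searchResult').value = '" +
    escapeApostrophes(term) + "';";
}

console.log(renderSearchScript("abc'"));
// prints: document.getElementById('searchResult').value = 'abc\'';
```

For a search term that contains only an apostrophe, the generated line is still a single, well-formed string assignment.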

What if the escape is escaped? Perhaps by throwing a backslash of your own into a search term like `abc\'`.

    document.getElementById('searchResult').value = 'abc\\'';

The developers caught the apostrophe, but missed the backslash. When JavaScript tokenizes the string it sees the escape working on the second backslash instead of the apostrophe. This corrupts the syntax, as follows:

    //            ⬇ end of string token
    value = 'abc\\'';
    //             ⬆ dangling apostrophe

From here we just start throwing HTML injection payloads against the app. JavaScript interprets `\\` as a single backslash, accepts the apostrophe as the string terminator, and parses the rest of our payload.

    https://web.site/search?q=abc\';alert(9)//

    document.getElementById('searchResult').value = 'abc\\';alert(9)//';

JavaScript’s semantics are lovely from an attacker’s perspective. Here’s an example payload using the String concatenation operator (`+`) to glue the `alert` function to the value:

    https://web.site/search?q=abc\'%2balert(9)//

    document.getElementById('searchResult').value = 'abc\\'+alert(9)//';

Or we could try a payload that uses the modulo operator (`%`) between the String and our alert.

    abc\'%alert(9)//

Maybe the developers added the `alert` function to a denylist, e.g. a regex for `alert\(`, by checking for an opening parenthesis. In that case, call the function via the `window` object’s property list. This makes it look like an innocuous string to naive regexes:

    abc\'%window["alert"](9)//

What happens if the denylist contained the word `alert` altogether? Build the string character by character:

    abc\'%window[String.fromCharCode(0x61,0x6c,0x65,0x72,0x74)](9)//

By now we’ve turned an evasion of an escaped apostrophe into an exercise in obfuscation and filter bypasses. These examples focused on all the permutations of escape sequences in JavaScript strings. Check out the HIQR for more anti-regex patterns and JavaScript obfuscation techniques.
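To see why the denylist approach keeps losing, here is a small sketch that runs payloads like the ones above past two hypothetical filters. The patterns and variable names are assumptions for illustration; a real filter would differ in detail but tends to fail the same way.

```javascript
// Two hypothetical denylist patterns, modeled on the ones described above.
const denyAlertCall = /alert\(/; // "alert" immediately followed by "("
const denyAlertWord = /alert/;   // the word "alert" anywhere

// Payloads as they would arrive in the search term.
const payloads = {
  direct: "abc\\'%alert(9)//",
  propertyLookup: "abc\\'%window[\"alert\"](9)//",
  charCodes: "abc\\'%window[String.fromCharCode(0x61,0x6c,0x65,0x72,0x74)](9)//",
};

function isBlocked(pattern, payload) {
  return pattern.test(payload);
}

for (const [name, payload] of Object.entries(payloads)) {
  console.log(name, isBlocked(denyAlertCall, payload), isBlocked(denyAlertWord, payload));
}
// prints:
// direct true true
// propertyLookup false true
// charCodes false false

// The character codes still spell the function name at run time.
console.log(String.fromCharCode(0x61, 0x6c, 0x65, 0x72, 0x74) === "alert"); // prints: true
```

Each tightening of the pattern blocks one spelling of the payload and leaves the next one open.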

A few additional tips when defending against the payloads:

- In code reviews, be suspicious of string concatenation. Use safer methods to bind user-supplied data to HTML.

- If you create output encoding methods rather than relying on frameworks like React, make sure they match the DOM context where the data will be written.

- Normalize data before operating on it, whether this entails character set conversion, character encoding, substitution, or removal.

- Apply security checks after normalization, preferring inclusion lists over exclusion lists – it’s a lot easier to guess what’s safe than assume what’s dangerous.
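As a rough sketch of an encoder that matches its context, the following compares an apostrophe-only escape with one written for a quoted JavaScript string literal. Every name here is hypothetical, and the choice of `\uXXXX` escapes is one reasonable option rather than the only one; a vetted encoding library, or avoiding generated inline script altogether, is usually the better route.

```javascript
// Hypothetical encoder for data placed inside a quoted JavaScript string literal.
function encodeForJsString(text) {
  // Backslash, both quote styles, markup-significant characters, and line
  // terminators are all rewritten as \uXXXX escapes.
  return text.replace(/[\\'"<>&\r\n\u2028\u2029]/g, (ch) =>
    "\\u" + ch.charCodeAt(0).toString(16).padStart(4, "0"));
}

// The flawed approach, for comparison: apostrophes only.
function escapeApostrophesOnly(text) {
  return text.replace(/'/g, "\\'");
}

// Demo harness only: evaluates the generated assignment with a stub alert()
// so an injected call can be detected instead of executed for real.
function evaluate(encoder, term) {
  let alertCalled = false;
  const source = "const value = '" + encoder(term) + "';\nreturn value;";
  const value = new Function("alert", source)(() => { alertCalled = true; });
  return { value, alertCalled };
}

const payload = "abc\\';alert(9)//"; // the search term abc\';alert(9)//

const flawed = evaluate(escapeApostrophesOnly, payload);
const encoded = evaluate(encodeForJsString, payload);

console.log(flawed.alertCalled);        // prints: true
console.log(encoded.alertCalled);       // prints: false
console.log(encoded.value === payload); // prints: true
```

With the context-aware encoder the payload survives as inert data: the string that comes out of the script is exactly the string the user typed.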

Normalization is an important first step. Any time you transform data you should reapply security checks. Snake Plissken was never one for offering advice. Instead, think of The Hitchhiker’s Guide to the Galaxy and recall Trillian’s report as the Infinite Improbability Drive powers down (p. 61):

…we have normality, I repeat we have normality…. Anything you still can’t cope with is therefore your own problem.

Good luck with normality and trying to correctly escape data. Security isn’t a certainty, but one thing is, at least according to Queen – there’s “no escape from reality.”

• • •

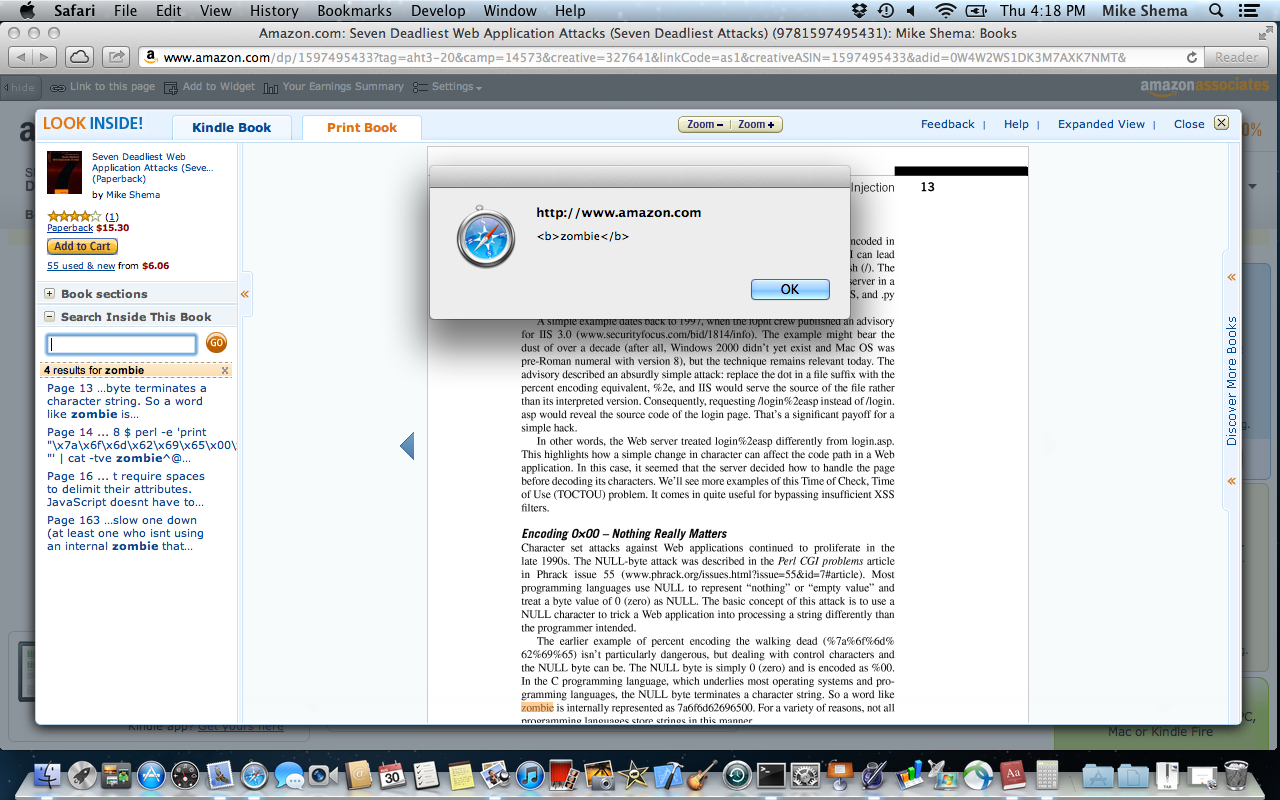

This is how the end began. Over two years ago I unwittingly planted the seeds of an undead outbreak into the pages of my book, Seven Deadliest Web Application Attacks.

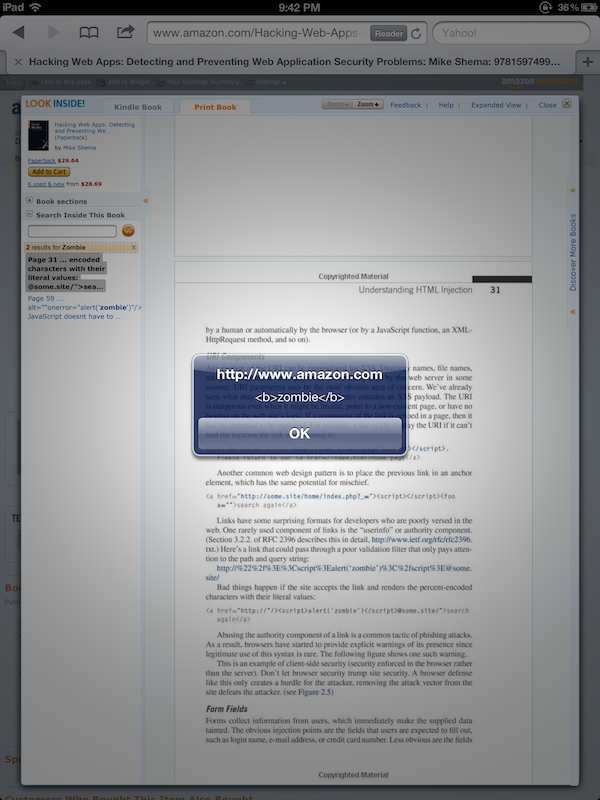

Only recently did I discover the decaying fruit of those seeds festering within the pages of Amazon. The book had been translated into Korean and I was curious about the translation of a few sentences. So, I went to check a few words in the English version, which was easy to do on Amazon:

- Visit the book’s Amazon page.

- Click on the “Look Inside!” feature. (Although apparently no longer available for this title.)

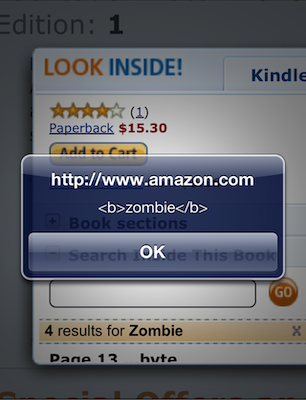

- Use the “Search Inside This Book” feature to search for zombie.

- Cower before the approaching horde of flesh-hungry brutes – or just click OK a few times.

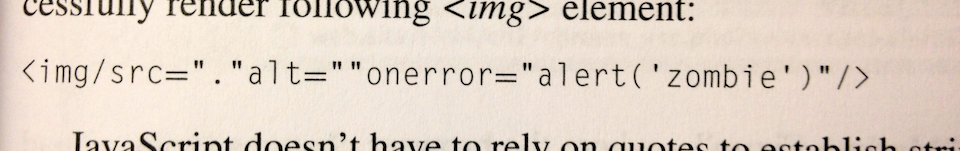

On page 16 of the book there is an example of an HTML element’s syntax that forgoes the typical whitespace used to separate attributes. The element’s name is followed by a valid token separator, albeit one rarely used in hand-written HTML. The printed text contains this line:

    <img/src="."alt=""onerror="alert('zombie')"/>

The “Search Inside” feature lists the matches for a search term. It makes the search term bold (i.e. adds `<b>` markup) and includes the context in which the search term was found (hence the surrounding text with the full `<img/src="."alt="" />` element). Then it just pops the contextual find into the list, to be treated as any other “text” extracted from the book.

    <img src="." alt="" onerror="alert('<b>zombie</b>')"/>

Finally, the matched term is placed within an anchor so you can click on it to find the relevant page. Notice that the `<img>` tag hasn’t been inoculated with HTML entities; it’s a classic HTML injection attack.

    <a ... href="javascript:void(0)">
    <span class="sitbReaderSearch-result-page">Page 16 ...t require spaces to delimit their attributes. <img src="." alt="" onerror="alert('<b>zombie</b>')"/> JavaScript doesn't have to...

(You can also use Google Books to see similar results, minus the XSS flaw.)
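For contrast, here is a minimal sketch of what inoculating the excerpt with HTML entities could look like while still bolding the search term. The function names are hypothetical and this says nothing about how Amazon's feature is actually built; it only illustrates encoding the book text before adding the `<b>` markup.

```javascript
// Replace the characters that are significant in HTML text and attribute values.
function escapeHtml(text) {
  const entities = { "&": "&amp;", "<": "&lt;", ">": "&gt;", '"': "&quot;", "'": "&#39;" };
  return text.replace(/[&<>"']/g, (ch) => entities[ch]);
}

// Split the raw excerpt on the raw term, encode every piece, then rejoin the
// pieces with the encoded term wrapped in <b>. Only the <b> tags are markup.
function highlightExcerpt(excerpt, term) {
  if (term === "") {
    return escapeHtml(excerpt);
  }
  return excerpt
    .split(term)
    .map((piece) => escapeHtml(piece))
    .join("<b>" + escapeHtml(term) + "</b>");
}

const printedLine = `<img/src="."alt=""onerror="alert('zombie')"/>`;
console.log(highlightExcerpt(printedLine, "zombie"));
// prints: &lt;img/src=&quot;.&quot;alt=&quot;&quot;onerror=&quot;alert(&#39;<b>zombie</b>&#39;)&quot;/&gt;
```

The browser would then display the `<img>` element as text from the book rather than parsing it as an element.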

This has actually happened before. In December 2010 a researcher in Germany, Dr. Wetter, reported the same effect via `<script>` tags when searching for content in different security books. He even found `<script>` tags whose `src` attribute pointed to a live host, which made the flaw infinitely more entertaining.

In fact, this was such a clever example of an unexpected vector for HTML injection that I included Dr. Wetter’s findings in the new Hacking Web Apps book (pages 40 and 41; the same `<img...onerror>` example shows up a little later on page 59).

Behold, there’s a different infestation on page 31 (see also the Google Books result). Try searching for zombie again. This time the server responds with a JSON object that contains `<script>` tags. This one was harder to track down. The `<script>` tags don’t appear in the search listing, but they do exist in the excerpt property of the JSON object that contains the results of search queries:

    {...,"totalResults":2,"results":[[52,"Page 31","... encoded characters with their literal values: <a href=\"http://\"/><script>alert('<b>zombie</b>') </script>@some.site/\">search again</a> Abusing the authority component of a ...", ...}

I only discovered this injection flaw when I recently searched the older book for references to the living dead. (Yes, a weird – but true – reason.)

How did this happen?

One theory is that an anti-XSS filter relied on a deny list to catch potentially malicious tags. In this case, the `<img>` tag used a valid, but uncommon, token separator that would have confused any filter expecting whitespace delimiters.

One common approach to regexes is to build a pattern based on what we think browsers know. For example, a quick filter to catch `<script>` tags or other opening tags like `<iframe src...>` or `<img src...>` might look like this – note the required space character:

    <[[:alpha:]]+(\s+|>)

A payload like `<img/src>` bypasses that regex. The browser correctly parses its valid syntax to create an image element. Of course, the `src` attribute fails to resolve, which triggers the `onerror` event handler, leading to yet another banal `alert()` declaring the presence of an HTML injection flaw.

The second `<script>`-based example is less clear without knowing more about the internals of the site. Perhaps a sequence of stripping quotes plus poor regexes misunderstood the `href` to actually contain an authority section? I don’t have a good guess for this one.

This highlights one problem of relying on regexes to parse a grammar like HTML. Yes, it’s possible to create strong, effective regexes. However, a regex does not represent the parsing state machine of browsers, including their quirks, exceptions, and “fix-up” behaviors.
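The bypass of that quick filter is easy to reproduce. The sketch below is an assumption-laden translation: JavaScript regexes have no POSIX character classes, so `[[:alpha:]]` is written as `[A-Za-z]`, and the variable names are made up for the example.

```javascript
// The quick filter from the text, with [[:alpha:]] rewritten as [A-Za-z].
const openTagFilter = /<[A-Za-z]+(\s+|>)/;

const samples = {
  script: "<script>",
  imgWithSpace: '<img src="." alt="">',
  // The line printed in the book: "/" separates the tag name from its first attribute.
  imgWithSlash: `<img/src="."alt=""onerror="alert('zombie')"/>`,
};

for (const [name, markup] of Object.entries(samples)) {
  console.log(name, openTagFilter.test(markup));
}
// prints:
// script true
// imgWithSpace true
// imgWithSlash false
```

The filter and the browser disagree about what an opening tag looks like, and the attacker only needs one such disagreement.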

Fortunately, HTML5 brings a degree of sanity to this mess by clearly defining rules of interpretation. On the other hand, web history foretells that we’ll be burdened with legacy modes and outdated browsers for years to come. So, be wary of those regexes.

Or maybe misusing regexes as parsers wasn’t the real flaw.

How did this really happen?

Well, I listen to all sorts of music while I write. You might argue that it was the demonic influence and Tony Iommi riffs of Black Sabbath that ensorcelled the book’s pages or that Judas Priest made me do it. Or that on March 30, 2010 – right around the book’s release – there was a full moon. Maybe in one of Amazon’s vast, randomly-stocked warehouses an oil drum with military markings spilled over, releasing a toxic gas that infected the books. We’ll never know for sure.

Maybe one day we’ll be safe from this kind of attack. HTML5 and Content Security Policy make more sandboxes and controls available for implementing countermeasures to HTML injection. But I just can’t shake the feeling that somehow, somewhere, there will always be more lurking about.

Until then, the most secure solution is to –

– huh, what’s that noise at the door…?

• • •


Linked – “Be great at what you do” – In, bringing you modern social networking with less than modern password protection – like, about 1970s UNIX modern. The hashes in this dump not only passed over a robust, well-known password hashing scheme like PBKDF2, they weren’t even salted. As a historical reference, UNIX crypt was already salting passwords in the late 1970s, and FreeBSD’s MD5-based scheme arrived around 1994.
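For readers who want to see the difference, here is a minimal sketch using Node's built-in `crypto` module. The helper names, the 16-byte salt, and the iteration count are assumptions chosen for illustration, not tuned recommendations.

```javascript
const crypto = require("crypto");

// Unsalted SHA-1, the style of hash found in the dump: equal passwords give equal hashes.
function unsaltedSha1(password) {
  return crypto.createHash("sha1").update(password, "utf8").digest("hex");
}

// Salted PBKDF2: a fresh random salt per password, stored alongside the hash.
function hashPassword(password, iterations = 100000) {
  const salt = crypto.randomBytes(16);
  const hash = crypto.pbkdf2Sync(password, salt, iterations, 32, "sha256");
  return { salt: salt.toString("hex"), iterations, hash: hash.toString("hex") };
}

// Recompute with the stored salt and iteration count, then compare in constant time.
function verifyPassword(password, record) {
  const candidate = crypto.pbkdf2Sync(
    password, Buffer.from(record.salt, "hex"), record.iterations, 32, "sha256");
  return crypto.timingSafeEqual(candidate, Buffer.from(record.hash, "hex"));
}

const first = hashPassword("waterdeep");
const second = hashPassword("waterdeep");
console.log(unsaltedSha1("waterdeep") === unsaltedSha1("waterdeep")); // prints: true
console.log(first.hash === second.hash);                              // different salts, so almost certainly false
console.log(verifyPassword("waterdeep", first));                      // prints: true
```

With a salt, two users who pick the same password no longer share a hash, so one successful guess does not unlock every matching account.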

It also appears some users are confused as to what constitutes a good password. Length? Characters? Punctuation? Phrases? An unfortunate number of users went for length, but neglected the shift key, space bar, or one of those numbers above qwerty.

I sat down for 20 minutes with `shasum` and `grep` – plus my bookshelf for inspiration – to guess some possible passwords without resorting to a brute-force dictionary crack.

    grep `echo -n myownpassword | shasum | cut -c6-40` SHA1.txt

The grep/shasum trick works on Unix-like command lines. John the Ripper is the usual tool for password cracking without entering the super assembly of GPU customization.
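The same lookup can be sketched in Node for anyone without a Unix shell handy. The function names and the sample lines are made up for illustration. The `cut -c6-40` step keeps characters 6 through 40 of the hex digest; the post does not say why, though the dump was widely reported to have the first five hex digits zeroed on some entries.

```javascript
const crypto = require("crypto");

// Equivalent of `echo -n password | shasum | cut -c6-40`:
// the SHA-1 hex digest with its first five characters dropped.
function sha1Suffix(password) {
  return crypto.createHash("sha1").update(password, "utf8").digest("hex").slice(5);
}

// Equivalent of the grep: return every dump line that contains the suffix.
function findInDump(password, dumpLines) {
  const suffix = sha1Suffix(password);
  return dumpLines.filter((line) => line.includes(suffix));
}

// Synthetic stand-in for SHA1.txt; these are not lines from the real dump.
const sampleDump = [
  "000001e4c9b93f3f0682250b6cf8331b7ee68fd8",
  "ffffffffffffffffffffffffffffffffffffffff",
];

console.log(findInDump("password", sampleDump).length);      // prints: 1
console.log(findInDump("1stEditionAD&D", sampleDump).length); // prints: 0
```

In practice the lines would come from reading the dump file, for example with `fs.readFileSync("SHA1.txt", "utf8").split("\n")`.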

I love sci-fi and fantasy. I still run an RPG session on a weekly basis; there’s no dust on my polyhedrals. Speaking of RPGs, I started the guesswork with 1st Edition AD&D terms only to strike out after a dozen tries, but the 2nd Edition references fared better:

waterdeep – Undermountain was awesome, unlike your password.

menzoberranzan – Yeah, mister dual-scimitars shows up in the list, too. This single-handedly killed the Ranger class for me. (Er, not before I had about three rangers with dual longswords. ‘Cause that was totally different…)

No one seems to have taken “1stEditionAD&D”. Maybe that’ll be my new password – 14 characters, a number, a symbol, what’s not to love? Aside from this retroactive revelation?

tardis – Come on, that’s not even eight characters. Would tombaker or jonpertwee approve? I don’t think so. But no William Hartnell? Have you no sense of history? Even for a timelord?

doctorwho – Longer, but…um…we just covered this.

badwolf – Cool, some Jack Harkness fans out there, but still not eight characters.

torchwood – Love the show, but your anagram improves nothing.

kar120c – I’m glad there’s a fan of The Prisoner out there. It was a cool series with a mind-blowingly bizarre, pontificating, intriguing ending that demands discussion. However, not only is that password short, it even shows up in my book. I should find out who it was and send them a signed copy.

itsatrap – Seriously? You chose a cliched, meme-laden movie quote that short? And you couldn’t be bothered with an exclamation point at the end? At least you chose a line from the best of the three movies.

myprecious – Not anymore.

onering – Onering? While you were out onering your oner a password cracker was cracking your comprehension of LotR. By the way, hackers have also read earthsea, theshining and darktower. Hey, they’ve got good taste.

I adore the Dune books. Dune is near the top of my favorites. Seems I’m not the only fan:

benegesserit – Don’t they have some other quotes? Something about fear?

fearisthemindkiller – Heh, even the hackers hadn’t cracked that one yet. Referencing The Litany Against Fear would have been a nice move except that if “fear is the mind killer” then “obvious is the password.”

entersandman, blackened, dyerseve – What are you going to do when you run out of Metallica tracks? Use megadeath? It’s almost sadbuttrue. And jethrotull beat them at the Grammys. So, there.

loveiskind – Love is patient, love is kind, hackers aren’t stupid, passwords they find.

h4xx0r – No, probably not.

notmypassword – Actually, it is. At least you didn’t choose a 14-character secretpassword. That would just be dumb.

stevejobs – Now how is he going to change his password?

• • •