
At the beginning of February 2017 I gave a brief talk that noted how Let’s Encrypt and cloud-based architectures encourage positive appsec behaviors. Over a span of barely three weeks, several security events seemed to undercut that thesis: Cloudbleed, SHAttered, and the S3 outage.

Coincidentally, those events also covered the triad of confidentiality, integrity, and availability.

So, let’s revisit that thesis and how we should view those events through a perspective of risk and engineering.

Eventually Encrypted

For well over a decade, at least two major hurdles blocked pervasive HTTPS. The first was convincing sites to deploy HTTPS in the first place and take on the cost of purchasing certificates. The second was getting HTTPS deployments to use strong configurations that enforced TLS for all connections and only used recommended ciphers.

Setting aside the distinctions between security and compliance, PCI was a crucial driver for adopting strong HTTPS. Having a requirement for transport encryption, backed by financial consequences for failure, has been more successful than asking nicely, raising awareness at security conferences, or shaming. I suspect the rate of HTTPS adoption has been far faster for in-scope PCI sites than others.

The SSL Labs project might also be a factor, but it straddles that line of encouragement through observability and shaming. It distilled a comprehensive analysis of a site’s TLS configuration into a simple letter score. The publicly visible results could be used as a shaming tactic, but that’s a weaker strategy for motivating positive change. Plus, doing so doesn’t address any of the HTTPS hurdles, whether convincing sites to shoulder the cost of obtaining certs or dealing with the overhead of managing them.

Still, SSL Labs provides an easy way for organizations to consistently monitor and evaluate their sites. This is a step towards providing help for migration to HTTPS-only sites. App owners still bear the burden of fixing errors and misconfigurations, but this tool made it easier to measure and track their progress towards strong TLS.

Effectively Encrypted

Where SSL Labs inspires behavioral change via metrics, the Let’s Encrypt project empowers behavioral change by addressing fundamental challenges faced by app owners.

Let’s Encrypt eases the resource burden of managing HTTPS endpoints. It removes the initial cost of certs (they’re free!) and reduces the ongoing maintenance cost of deploying, rotating, and handling certs by supporting automation with the ACME protocol. Even so, solving the TLS cert problem is orthogonal to solving the TLS configuration problem. A valid Let’s Encrypt cert might still be deployed to an HTTPS service that gets a bad grade from SSL Labs.

A cert signed with SHA-1, for example, will lower a site’s SSL Labs grade. SHA-1 has been known to be weak for years and its use discouraged, specifically for digital signatures. Having certs that are both free and easy to rotate (i.e. easy to obtain and deploy new ones) makes it easier for sites to migrate off deprecated algorithms. The ability to react quickly to change, whether security-related or not, is a sign of a mature organization. Automation as made possible via Let’s Encrypt is a great way to improve that ability.

Facebook explained their trade-offs along the way to hardening their TLS configuration and deprecating SHA-1. It was an engineering-driven security decision that evaluated solutions and chose among conflicting optimizations – all informed by measures of risk. Engineering is the key word in this paragraph; it’s how systems get built.

Writing down a simple requirement and prototyping something on a single system with a few dozen users is far removed from delivering a service to hundreds of millions of people. WhatsApp’s crypto design fell into a similar discussion of risk-based engineering [1]. This excellent article on messaging app security and privacy is another example of evaluating risk through threat models.

Exceptional Events

Companies like Cloudflare take a step beyond SSL Labs and Let’s Encrypt by offering a service to handle both certs and configuration for sites. They pioneered techniques like Keyless SSL in response to their distinctive threat model of handling private keys for multiple entities.

If you look at the Cloudbleed report and immediately think a service like that should be ditched, it’s important to question the reasoning behind such a risk assessment. Rather than make organizations suffer through the burden of building and maintaining HTTPS, they can have a service that establishes a strong default. Adoption of HTTPS is slow enough, and fraught with error, that services like this make sense for many site owners.

Compare this with Heartbleed. It also affected TLS sites, could be more targeted, and exposed private keys (among other sensitive data). The cleanup was long, laborious, and haphazard. Cloudbleed had significant potential exposure, although its discovery and remediation likely led to a lower realized risk than Heartbleed.

If you’re saying move away from services like that, what in practice are you saying to move towards? Self-hosted systems in a rack in an electrical closet? Systems that will degrade over time and, most likely, never be upgraded to TLS 1.3? That seems ill-advised.

Does that S3 outage raise concern for cloud-based systems? Not to a great degree. Or, at least, not in a new way. If your site was negatively impacted by the downtime, a good use of that time might have been exploring ways to architect fail-over systems or revisit failure modes and service degradation decisions. Sometimes it’s fine to explicitly accept certain failure modes. That’s what engineering and business do against constraints of resource and budget.
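As a rough illustration of what revisiting failure modes can look like in code, here is a minimal sketch of ordered fail-over with an explicit degraded response. The function and the two stand-in sources (`primary_store`, `replica_store`) are hypothetical names for this example and don’t represent any particular cloud SDK.

```python
from typing import Callable, Iterable, Optional


def first_available(sources: Iterable[Callable[[], bytes]],
                    degraded: Optional[bytes] = None) -> bytes:
    """Return the first successful result, trying sources in order.

    If every source fails, return the explicit degraded response when one
    was chosen; otherwise re-raise the last error (an accepted failure mode).
    """
    last_error: Optional[Exception] = None
    for fetch in sources:
        try:
            return fetch()
        except Exception as err:  # a real system would catch narrower errors
            last_error = err
    if degraded is not None:
        return degraded
    raise last_error if last_error else RuntimeError("no sources configured")


# Hypothetical stand-ins for a primary object store and a replica.
def primary_store() -> bytes:
    raise ConnectionError("primary region unavailable")


def replica_store() -> bytes:
    return b"asset from replica"


result = first_available([primary_store, replica_store])
```

Passing `degraded=None` is the code-level version of explicitly accepting a failure mode: the error surfaces instead of being papered over.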

Coherently Considered

So, let’s leave a few exercises for the reader, a few open-ended questions on threat modeling and engineering.

Flash has long been rebuked for its security weaknesses. As with SHA-1, the infosec community voiced this warning for years. There have even been one or two (ok, lots more than two) demonstrated exploits against it. It persists. It’s embedded in Chrome [2], which you can interpret as a paternalistic effort to sandbox it or, more cynically, an effort to ensure YouTube videos and ad revenue aren’t impacted by an exodus from the plugin.

Browsers have had impactful vulns, many of which carry significant risk and impact as measured by the annual $50K+ rewards from Pwn2Own competitions. The minuscule number of browser vendors carries risk beyond just vulns, affecting influence on standards and protections for privacy. Yet having more browsers doesn’t necessarily equate to better security models within browsers.

Approaching these kinds of flaws with ideas around resilience, isolation, authn/authz models, or feedback loops reflects just a few traits of a builder. They can be traits for a breaker as well, in creating attacks against those designs.

Approaching these by explaining design flaws and identifying implementation errors reflects just a few traits of a breaker. They can be traits for a builder as well, in designing controls and barriers to disrupt attacks.

Approaching these by dismissing complexity, designing systems no one would (or could) use, or highlighting irrelevant flaws is often just blather. Infosec has its share of vacuous and overly-ambiguous phrases like military-grade encryption, perfectly secure, artificial intelligence (yeah, I know, blah blah blah Skynet), use-Tor-use-Signal, and more.

There’s a place for mockery and snark. This isn’t concern trolling, which is preoccupied with how things are said. This is about understanding the underlying foundation of what is being said about designs – the risk calculations, the threat models, the constraints.

Constructive Closing

I believe in supporting people to self-identify along the spectrum of builder and breaker rather than pin them to narrow roles, a principle applicable to many more important subjects as well. This is about the intellectual reward of tackling challenges faced by builders and breakers alike, and discarding the blather of uninformed opinions and empty solutions.

I’ll close with this observation from Carl Sagan in The Demon-Haunted World:

It is far better to grasp the universe as it really is than to persist in delusion, however satisfying and reassuring.

Our appsec universe consists of systems and data and users, each in different orbits.

Security should contribute to the gravity that binds them together, not the black hole that tears them apart. Engineering works within the universe as it really is. Shed the delusion that one appsec solution in a vacuum is always universal.

1. WhatsApp provides great documentation on their designs for end-to-end encryption.

2. In 2017 Chrome announced they’d remove Flash by the end of 2020.

• • •

The next time you visit a cafe to sip coffee and surf on some free Wi-Fi, try an experiment: Log in to some of your usual sites. Then, with a smile, hand the keyboard over to a stranger. Let them use it for 20 minutes. Remember to pick up your laptop before you leave.

While the scenario seems silly and contrived, it essentially happens each time you visit a site that doesn’t bother to encrypt the traffic to your browser — in other words, sites that neglect using HTTPS.

The encryption of HTTPS provides benefits like confidentiality, integrity, and identity. Your information remains confidential from prying eyes because only your browser and the server can decrypt the traffic. Integrity protects the data from being modified without your (or the site’s) knowledge. We’ll address identity in a bit.

There’s an important distinction between tweeting to the world or sharing thoughts on social media and having your browsing activity exposed over unencrypted HTTP. You intentionally share tweets, likes, pics, and thoughts. The lack of encryption means you’re unintentionally exposing the controls necessary to share such things. It’s the difference between someone viewing your profile and taking control of your keyboard to modify that profile.

The Spy Who Sniffed Me

We most often hear about hackers attacking web sites, but it’s just as easy and lucrative to attack your browser. One method is to deliver malware or lull someone into visiting a spoofed site via phishing. Those techniques don’t require targeting a specific victim. They can be launched scattershot from anywhere on the web, regardless of the attacker’s geographic or network relationship to the victim. Another kind of attack, sniffing, requires proximity to the victim but is no less potent or worrisome.

Sniffing attacks watch the traffic to and from a victim’s web browser. (In fact, all of the computer’s traffic may be visible, but we’re only worried about web sites for now.) The only catch is that the attacker needs to be able to see the communication channel. The easiest way for them to do this is to sit next to one of the end points, either the web server or the web browser. Unencrypted wireless networks — think of cafes, libraries, and airports — make it easy to find the browser’s end point because the traffic is visible to anyone who can receive that network’s signal.

Encryption defeats sniffing attacks by concealing the traffic’s meaning from all except those who know the secret to decrypting it. The traffic remains visible to the sniffer, but it appears as streams of random bytes rather than HTML, links, cookies, and passwords. The trick is understanding where to apply encryption in order to protect your data. For example, wireless networks can be encrypted, but the history of wireless security is laden with egregious mistakes. And it’s not necessarily a sufficient solution.

The first wireless encryption scheme was called WEP. It was the security equivalent of Pig Latin. It seems secret at first. Then the novelty wears off once you realize everyone knows what ixnay on the ottenray means, even if they don’t know the movie reference. WEP required a password to join the network, but the protocol’s poor encryption exposed enough hints about the password that someone with a wireless sniffer could trivially reverse engineer it. This was a fatal flaw, because the time required to crack the password was a fraction of that needed to blindly guess the password with a brute force attack – a matter of hours (or less) instead of weeks (or centuries, as it should be).

Security improvements were attempted for Wi-Fi, but many turned out to be failures since they just metaphorically replaced Pig Latin with an obfuscation more along the lines of Klingon or Quenya, depending on your fandom leanings. The challenge was creating an encryption scheme that protected the password well enough that attackers would be forced to fall back to the inefficient brute force attack. The security goal is a Tower of Babel, with languages that only your computer and the wireless access point could understand — and which don’t drop hints for attackers. Protocols like WPA2 accomplish this far better than WEP ever did. WPA3 does even better.

We’ve been paying attention to public spaces, but the problem spans all kinds of networks. Sniffing attacks are just as feasible in corporate environments. They only differ in terms of threat scenarios. Fundamentally, HTTPS is required to protect the data transiting your browser.

S For Secure

Sites that neglect to use HTTPS are subverting the privacy controls you thought were protecting your data.

If my linguistic metaphors have left you with no understanding of the technical steps to execute sniffing attacks, rest assured the tools are simple and readily available. One from 2010 was a Firefox plugin called Firesheep. At the time, it enabled session hijacking on sites like Amazon, Facebook, Twitter, and others. The plugin demonstrated that technical attacks, regardless of sophistication, can be put into the hands of anyone who wishes to be mischievous, unethical, or malicious. Firesheep reduced the need for hacking skills to just being able to use a mouse.

To be clear, sniffing attacks don’t need to grab your password in order to negatively impact you. Login pages must use HTTPS to protect your credentials, but if a site then falls back to HTTP (without the S) after you log in, it’s not protecting your privacy or your temporary identity.

We need to take an existential diversion here to distinguish between “you” as the person visiting a site and the “you” that the site knows. Sites speak to browsers. They don’t (yet?) reach beyond the screen to know that you are in fact who you say you are. The credentials you supply for the login page are supposed to prove your identity because you are ostensibly the only one who knows them. Sites need to keep track of who you are and that you’ve presented valid credentials. So, the site sets a cookie in your browser. From then on, that cookie, a handful of bits, is your identity.

These identifying cookies need to be a shared secret – a value known only to your browser and the site. Otherwise, someone else could use that cookie to impersonate you.
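To make the shared-secret idea concrete, here is a minimal sketch of how a site might mint such a cookie using Python’s standard library. The cookie name `session` and the 32-byte token size are assumptions for illustration, not a description of how any particular site does it.

```python
import secrets
from http.cookies import SimpleCookie


def new_session_cookie(name: str = "session") -> SimpleCookie:
    """Build a session cookie holding an unguessable random token."""
    cookie = SimpleCookie()
    cookie[name] = secrets.token_urlsafe(32)  # 32 random bytes as URL-safe text
    cookie[name]["secure"] = True      # browser only sends it over HTTPS
    cookie[name]["httponly"] = True    # hidden from scripts running in the page
    cookie[name]["samesite"] = "Lax"   # limits when cross-site requests carry it
    cookie[name]["path"] = "/"
    return cookie


cookie = new_session_cookie()
header_value = cookie["session"].OutputString()  # value for a Set-Cookie header
```

The `Secure` attribute is the piece that ties back to sniffing: without it, the browser will happily send the cookie over plain HTTP where anyone on the network can read it.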

S For Sometimes

Sadly, it seems that money and corporate embarrassment motivate protective measures far more often than privacy concerns. Many sites have chosen to implement a more rigorous enforcement of HTTPS connections called HTTP Strict Transport Security (HSTS). PayPal, whose users have long been victims of money-draining phishing attacks, was one of the first sites to use HSTS to prevent malicious sites from fooling browsers into switching to HTTP or spoofing pages. Like any good security measure, HSTS is transparent to the user. All you need is a browser that supports it (all do) and a site to require it (many don’t).
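For a sense of how little is involved on the site’s side, here is a small sketch that builds and parses the `Strict-Transport-Security` response header. The one-year `max-age` and the helper names are assumptions chosen for this example; a real deployment would set the header in its web server or framework configuration.

```python
from typing import Dict, Tuple


def hsts_header(max_age_days: int = 365,
                include_subdomains: bool = True) -> Tuple[str, str]:
    """Return the (name, value) pair for an HSTS response header."""
    value = f"max-age={max_age_days * 24 * 60 * 60}"  # max-age is in seconds
    if include_subdomains:
        value += "; includeSubDomains"
    return ("Strict-Transport-Security", value)


def parse_hsts(value: str) -> Dict[str, object]:
    """Parse an HSTS header value into a small policy dict."""
    policy: Dict[str, object] = {"max_age": None, "include_subdomains": False}
    for part in value.split(";"):
        name, _, arg = part.strip().partition("=")
        name = name.strip().lower()  # directive names are case-insensitive
        if name == "max-age":
            policy["max_age"] = int(arg.strip().strip('"'))
        elif name == "includesubdomains":
            policy["include_subdomains"] = True
    return policy


name, value = hsts_header()
policy = parse_hsts(value)
```

Browsers only honor this header when it arrives over HTTPS, so it complements a working HTTPS deployment without replacing one.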

Improvements like HSTS should be encouraged. HTTPS is inarguably an important protection. However, the protocol has its share of weaknesses and determined attackers. Plus, HTTPS only protects against certain types of attacks; it has no bearing on cross-site scripting, SQL injection, or a myriad of other vulnerabilities. The security community is neither ignorant of these problems nor lacking in solutions. The lock icon on your browser that indicates a site uses HTTPS may be minuscule, but the protection it affords is significant.

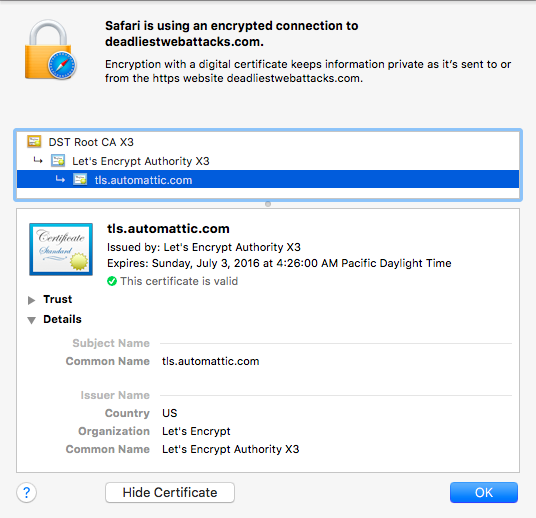

In the 2016 version of this article, the SSL Pulse noted only ~72% of the top 200K sites surveyed supported TLS 1.2, with 29% still supporting the egregiously insecure SSLv3. The Let’s Encrypt project started making TLS certs more attainable in late 2015.

In March 2023, the SSL Pulse has TLS 1.2 at ~100% of sites surveyed, with 2% stubbornly supporting SSLv3. Even better is that the survey sees 61% of sites supporting TLS 1.3. That progress is a successful combination of Let’s Encrypt, browsers dropping support for ancient protocols, and the push by HTTP/2 and HTTP/3 to have always-encrypted channels on modern TLS versions. Additionally, it reports that ~34% of sites support HSTS.
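On the client side, refusing those ancient protocols can be a couple of lines. The following is a minimal sketch using Python’s `ssl` module; the choice of TLS 1.2 as the floor is an assumption for this example, and recent Python and OpenSSL builds may already default to it.

```python
import ssl


def strict_client_context() -> ssl.SSLContext:
    """Client-side TLS context that refuses anything older than TLS 1.2."""
    ctx = ssl.create_default_context()  # verifies certs and hostnames by default
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = strict_client_context()
```

A connection made with this context fails its handshake against a server that only speaks SSLv3 or early TLS, rather than silently downgrading.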

• • •

The alphabetically adjacent domains when this site was hosted at WordPress included air fresheners, web security, and cats. Thanks to Let’s Encrypt, all of those now support HTTPS by default.

Even better, WordPress serves the Strict-Transport-Security header to ensure browsers adhere to HTTPS when visiting it. So, whether you’re being entertained by odors, HTML injection, or felines, your browser is encrypting traffic.

Let’s Encrypt makes this possible for two reasons. The project provides free certificates, which addresses the economic aspect of obtaining and managing them. Users who blog, create content, or set up their own web sites can do so with free tools. But the HTTPS certificates were never free and there was little incentive for them to spend money. To further compound the issue, users creating content and web sites rarely needed to know the technical underpinnings of how those sites were set up (which is perfectly fine!). Yet the secure handling and deployment of certificates requires more technical knowledge.

Most importantly, Let’s Encrypt addressed this latter challenge by establishing a simple, secure ACME protocol for the acquisition, maintenance, and renewal of certificates. Even when (or perhaps especially when) certificates have lifetimes of one or two years, site administrators would forget to renew them. It’s this level of automation that makes the project successful.
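The forgotten-renewal problem reduces to a date comparison that a machine is happy to run every day. Here is a minimal sketch, assuming the `notAfter` text format that Python’s `ssl` module reports for a peer certificate; the 30-day threshold and the sample dates are assumptions for illustration.

```python
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> float:
    """Days between `now` and a cert's notAfter time.

    `not_after` uses the text format found in the dict returned by
    ssl.SSLSocket.getpeercert(), e.g. "Jan  5 09:34:43 2018 GMT".
    """
    expires = datetime.fromtimestamp(ssl.cert_time_to_seconds(not_after),
                                     tz=timezone.utc)
    return (expires - now).total_seconds() / 86400


def needs_renewal(not_after: str, now: datetime, threshold_days: int = 30) -> bool:
    """True when the cert expires in fewer than `threshold_days` days."""
    return days_until_expiry(not_after, now) < threshold_days


# Sample values for illustration only.
sample_not_after = "Jan  5 09:34:43 2018 GMT"
sample_now = datetime(2017, 11, 1, tzinfo=timezone.utc)
```

An ACME client performs the equivalent check on a schedule and requests a fresh cert when the answer is yes, which removes the human memory from the loop.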

Hence, WordPress can now afford – both in the economic and technical sense – to deploy certificates for all the custom domain names it hosts. That’s what brings us to the cert for this site, which is but one domain in a list of SAN entries from deadairfresheners to a Russian-language blog about, inevitably, cats.

Yet not everyone has taken advantage of the new ease of encrypting everything. Five years ago I wrote about Why You Should Always Use HTTPS. Sadly, the article itself is served only via HTTP. You can request it via HTTPS, but the server returns a hostname mismatch error for the certificate, which breaks the intent of using a certificate to establish a server’s identity.

As with all things new, free, and automated, there will be abuse. For one, malware authors, phishers, and the like will continue to move towards HTTPS connections. The key point there being “continue to”. Such bad actors already have access to certs and to compromised financial accounts with which to buy them. There’s little in Let’s Encrypt that aggravates this.

Attackers may start looking for letsencrypt clients in order to obtain certs by fraudulently requesting new ones, for example by provisioning a resource under a well-known URI for the domain. (This and provisioning DNS records are two ways of establishing trust with the Let’s Encrypt CA.)
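The well-known URI in question follows the shape defined for ACME’s HTTP challenge in RFC 8555. Here is a minimal sketch of the two strings involved; the token and thumbprint values are made-up placeholders, and computing a real account key thumbprint is out of scope here.

```python
def http01_challenge_url(domain: str, token: str) -> str:
    """URL the CA fetches to validate control of `domain` (plain HTTP)."""
    return f"http://{domain}/.well-known/acme-challenge/{token}"


def key_authorization(token: str, account_thumbprint: str) -> str:
    """Body the server must return: the token joined to the account key thumbprint."""
    return f"{token}.{account_thumbprint}"


# Placeholder values for illustration; real ones come from the CA and the account key.
url = http01_challenge_url("example.com", "hypothetical-token")
body = key_authorization("hypothetical-token", "hypothetical-thumbprint")
```

Anyone who can write a file at that path on a domain can pass the check, which is why write access to a site’s web root deserves the same care as the cert’s private key.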

Attackers may start accelerating domain enumeration via Let’s Encrypt SANs. Again, it’s trivial to walk through domains for any SAN certificate purchased today. This may only be a nuisance for hosting sites or aggregators who are jumbling multiple domains into a single cert.
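To show how trivial that walk is, here is a short sketch that lists the DNS names in a certificate’s SAN extension using only Python’s standard library. The sample dict mimics the shape returned by `getpeercert()`, and its domain names are invented for this example.

```python
import socket
import ssl
from typing import List


def fetch_cert(host: str, port: int = 443) -> dict:
    """Connect to `host` and return its validated certificate as a dict."""
    ctx = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()


def san_dns_names(cert: dict) -> List[str]:
    """Extract the DNS entries from a cert dict's subjectAltName field."""
    return [value for kind, value in cert.get("subjectAltName", ()) if kind == "DNS"]


# Invented sample in the same shape as getpeercert() output.
sample_cert = {
    "subjectAltName": (
        ("DNS", "blog.example.com"),
        ("DNS", "cats.example.net"),
        ("IP Address", "192.0.2.10"),
    )
}
names = san_dns_names(sample_cert)
```

Calling `san_dns_names(fetch_cert(host))` against a shared-hosting cert returns every domain bundled alongside it, with no special tooling required.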

Such attacks aren’t proposed as creaky boards on the Let’s Encrypt stage. They’re merely reminders that we should always be reconsidering how old threats and techniques apply to new technologies and processes. For many “astounding” hacks of today, there are likely close parallels to old Phrack articles or basic security principles awaiting clever reinterpretation for our modern times.

Finally, I must leave you with some sort of pop culture reference, or else this post wouldn’t befit the site. This is the 400th anniversary of Shakespeare’s death. So I shall leave you with a quote from Julius Caesar:

Nay, an I tell you that, I’ll ne’er look you i’ the face again: but those that understood him smiled at one another and shook their heads; but, for mine own part, it was Greek to me. I could tell you more news too: Marullus and Flavius, for pulling scarfs off Caesar’s images, are put to silence. Fare you well. There was more foolery yet, if I could remember it.

May it take us far less time to finally bury HTTP and praise the ubiquity of HTTPS. We’ve had enough foolery of unencrypted traffic.

• • •